Special thanks to Zeo, DAOctor, Zhengyu, and Christina for their contributions, review, and feedback.

Building structural databases of knowledge and better visualizations of knowledge are important tasks for advancing computer science, AI, and the Web. Before crypto and the world of decentralized applications emerged, the older “Web 3.0” research focused mostly on building knowledge bases and knowledge graphs, as well as on representation and reasoning based on these structures (the Semantic Web).

There are two general approaches to building knowledge bases. One is to fetch data from the Web and other data sources and organize it into a database of knowledge (mostly a huge collection of “triples” that together form a graph), and then apply “higher-order logics” or machine learning techniques to these structures for reasoning and other intelligent tasks. The other is to rely on human intelligence to build a database collaboratively (e.g., Wikipedia, ConceptNet, or the citizen science projects we will discuss in more detail later).
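To make the “triples” idea concrete, here is a minimal Python sketch of a triple store: each fact is a (subject, predicate, object) tuple, and a query matches a pattern in which `None` acts as a wildcard. The facts, predicate names, and the `match` helper are illustrative assumptions rather than any particular system's API; real systems typically use standards such as RDF and query languages such as SPARQL.

```python
from typing import NamedTuple, Optional


class Triple(NamedTuple):
    """One fact in a knowledge graph: subject --predicate--> object."""
    subject: str
    predicate: str
    obj: str


# A toy knowledge graph; the facts and predicate names are illustrative only.
TRIPLES = [
    Triple("Merkle tree", "is_a", "tree data structure"),
    Triple("Merkle tree", "uses", "cryptographic hash function"),
    Triple("cryptographic hash function", "has_property", "collision resistance"),
    Triple("Verkle tree", "builds_on", "Merkle tree"),
]


def match(subject: Optional[str] = None,
          predicate: Optional[str] = None,
          obj: Optional[str] = None) -> list[Triple]:
    """Return every triple matching the pattern; None matches anything."""
    return [
        t for t in TRIPLES
        if (subject is None or t.subject == subject)
        and (predicate is None or t.predicate == predicate)
        and (obj is None or t.obj == obj)
    ]


if __name__ == "__main__":
    # Everything the graph states directly about "Merkle tree".
    for t in match(subject="Merkle tree"):
        print(t.subject, t.predicate, t.obj)
```

Note that every edge carries a labeled relation; this is the part that the knowledge trees discussed later deliberately leave out.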

This article will first review some relevant innovations from the past decades, and then discuss how we can move forward and build a high-level knowledge database with collective intelligence and sustainable incentive mechanisms.

Knowledge Base, Knowledge Graphs, and Wikipedia

For a long time, people have been interested in creating knowledge graphs, mainly for two reasons:

- To connect the dots between all the information and knowledge humans have created, and

- To apply reasoning and machine learning techniques to the knowledge graph to produce better AI, and to use the system to improve the user experience of Web2 products.

Now, it’s pretty clear that useful knowledge graphs were mostly created as underlying tools of Web2 megacorporations. For example, the Facebook knowledge graph improved social network search, and the Google Knowledge Graph helped present related information. Since everything is closed-source, we don’t know exactly how these knowledge graphs were built, but judging from the UI, they certainly help improve the user experience.

The effort of the Wikipedia community is amazing; it was one of the first demonstrations of the power of Internet communities. In addition, open databases are available as Internet public goods. One example is DBpedia, a database that provides APIs to applications that want to harness the Wikipedia knowledge base. Another example is ConceptNet, a freely available semantic network that helps AI and NLP programs access common-sense semantics.

However, there are some fundamental limits to what these Internet public good organizations can do. Wikipedia relies on donations every year and is operated by a 501(c)(3) organization (the Wikimedia Foundation), which makes it hard to introduce more advanced incentive mechanisms or to build more ambitious infrastructure on top of the network of knowledge. The same is true of DBpedia, ConceptNet, and similar projects. As non-profit organizations, these public good efforts find it hard to go further and build a community that keeps developing the infrastructure and eventually forms an ecosystem. When I was in college, I built a Wikipedia graph visualization and search tool using DBpedia’s API, but at that time it was much more difficult to find a vibrant community to join. Things are very different in today’s crypto community: developers with great ideas can participate in many more activities, team up, and get support from multi-chain ecosystems.

However, I’m not suggesting building another Wikipedia (i.e., DAO-ifying Wikipedia, or a “Web3 Wikipedia”). Despite the limitations of the current non-profit model, the content on the Wikipedia website is already well curated, its structure is well established, and people are already benefiting greatly from it. In general, Wikipedia is good at storing descriptions of knowledge, and Web1 and Web2 infrastructure has already made knowledge searchable. What Wikipedia and the existing Web infrastructure are not good at is presenting “human understanding” of knowledge: the structural knowledge in the human brain. Presenting such information requires, at its core, human curation and human collaboration, which Web1/Web2 infrastructure does not support well but Web3 infrastructure and coordination mechanisms can make achievable.

Notably, there have been efforts to build massive structural databases to enhance machine understanding of knowledge. For example, projects like Cyc have been trying for decades to build a common-sense knowledge base that helps machines mimic the human brain. Such efforts eventually turned into commercial software businesses, because strong AI obviously requires much more than a knowledge base of nodes and relations. In contrast to building a structural knowledge base for machines, what matters here is human understanding of knowledge and human curation: building a knowledge base of human understanding to help more humans understand.

Instead, it is worth thinking about how we can add higher-level semantics, i.e., the structural knowledge described in this article, to the current Web of knowledge.

Citizen Science and Volunteer Computing

Another branch of exploration I want to mention is citizen science and volunteer computing. In the early 2010s, there were many exciting projects from the scientific community that leveraged the intelligence of the crowd to accelerate research and scientific discovery. In general, there were two types of such efforts. The first type, volunteer computing, distributes computing tasks to a crowd of personal computing devices (e.g., LHC@Home, SETI@Home). The second type, citizen science, creates repetitive tasks (not a bad word here!) that anyone can perform. The project collects data (sometimes analytical results) from the crowd of contributors and feeds them into research projects to produce meaningful results (e.g., projects listed on Citizen Cyberlab or SciStarter; in the machine learning community, tagging pictures to enrich training data can also be crowdsourced). Think of these efforts as “DAOs” from before the word “DAO” was invented: the coordination aspect of decentralized communities is nothing new!

Many of these projects were successful, but unfortunately their sustainability is, again, limited. SETI@Home is no longer operating, and many citizen science projects could have lasted longer but didn’t. Incentives and an ecosystem are two things that matter for any collaborative effort. Without an ecosystem, innovation is constrained; without a sustainable incentive mechanism, there won’t be a vibrant community, and without a vibrant community, an ecosystem will never emerge.

Structure of Complex Concepts and Knowledge

Now let’s think about what high-level concepts and knowledge look like. Intuitively, when we “understand” a concept, we actually understand quite a lot of its details. There are two ways we can think about the process of “understanding”:

1. Understanding through tree-like structures

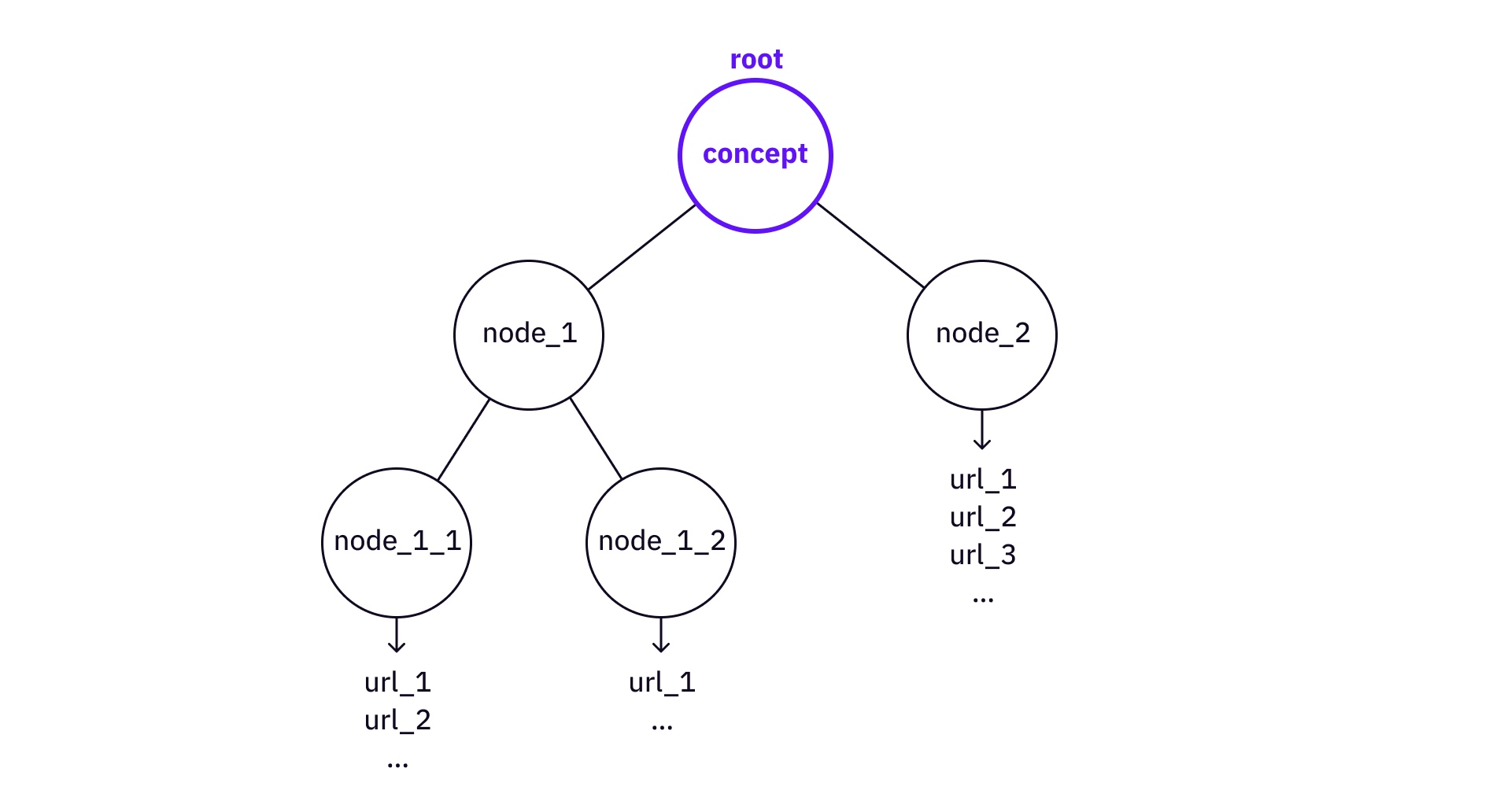

When we try to “understand” (or, say, “learn”) something, we break it down into a tree structure. For example, to understand a concept like “Merkle tree”, we have to understand sub-concepts like “cryptographic hash functions” and “tree data structures”, which in turn require us to understand more basic concepts like “hash function”, “collision resistance”, etc.

The deeper the tree is broken down, the more primitive the concepts become. At some point, there will be very straightforward resources on the Web that can be referenced directly (e.g., a Wikipedia page or an article / video).
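The “Merkle tree” breakdown can be written down as a small nested structure. The following is a minimal Python sketch under assumed names (`Concept`, `prerequisites`, and `leaf_references` are hypothetical, and the reference strings stand in for real Web resources): inner nodes are concepts, leaves carry references, and a post-order walk lists every concept after the sub-concepts it depends on.

```python
from dataclasses import dataclass, field


@dataclass
class Concept:
    """A node in a knowledge tree (hypothetical representation)."""
    name: str
    children: list["Concept"] = field(default_factory=list)
    references: list[str] = field(default_factory=list)  # only leaves carry these


merkle_tree = Concept("Merkle tree", [
    Concept("cryptographic hash functions", [
        Concept("hash function", references=["Wikipedia: Hash function"]),
        Concept("collision resistance", references=["Wikipedia: Collision resistance"]),
    ]),
    Concept("tree data structures", references=["Wikipedia: Tree (data structure)"]),
])


def prerequisites(node: Concept) -> list[str]:
    """Post-order walk: each concept appears after all of its sub-concepts."""
    order: list[str] = []
    for child in node.children:
        order.extend(prerequisites(child))
    order.append(node.name)
    return order


def leaf_references(node: Concept) -> list[str]:
    """Collect the Web resources that the leaves point to."""
    if not node.children:
        return list(node.references)
    refs: list[str] = []
    for child in node.children:
        refs.extend(leaf_references(child))
    return refs


if __name__ == "__main__":
    print(prerequisites(merkle_tree))
    print(leaf_references(merkle_tree))
```

A post-order walk is one natural reading order for a learner: by the time a concept appears, everything beneath it in the tree has already been covered.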

We can find similar ideas in early AI research. Marvin Minsky’s K-Line theory suggests that our memory and knowledge are stored in a tree structure (the P-nodes and K-nodes). Although there is little evidence that such structures actually exist in our brains, the model has the power to explain how human memory and the human brain work, and a tree structure is arguably the most succinct form for storing structural knowledge.

There are two directions in which we can use tree structures for knowledge storage and understanding: breakdown and build-up.

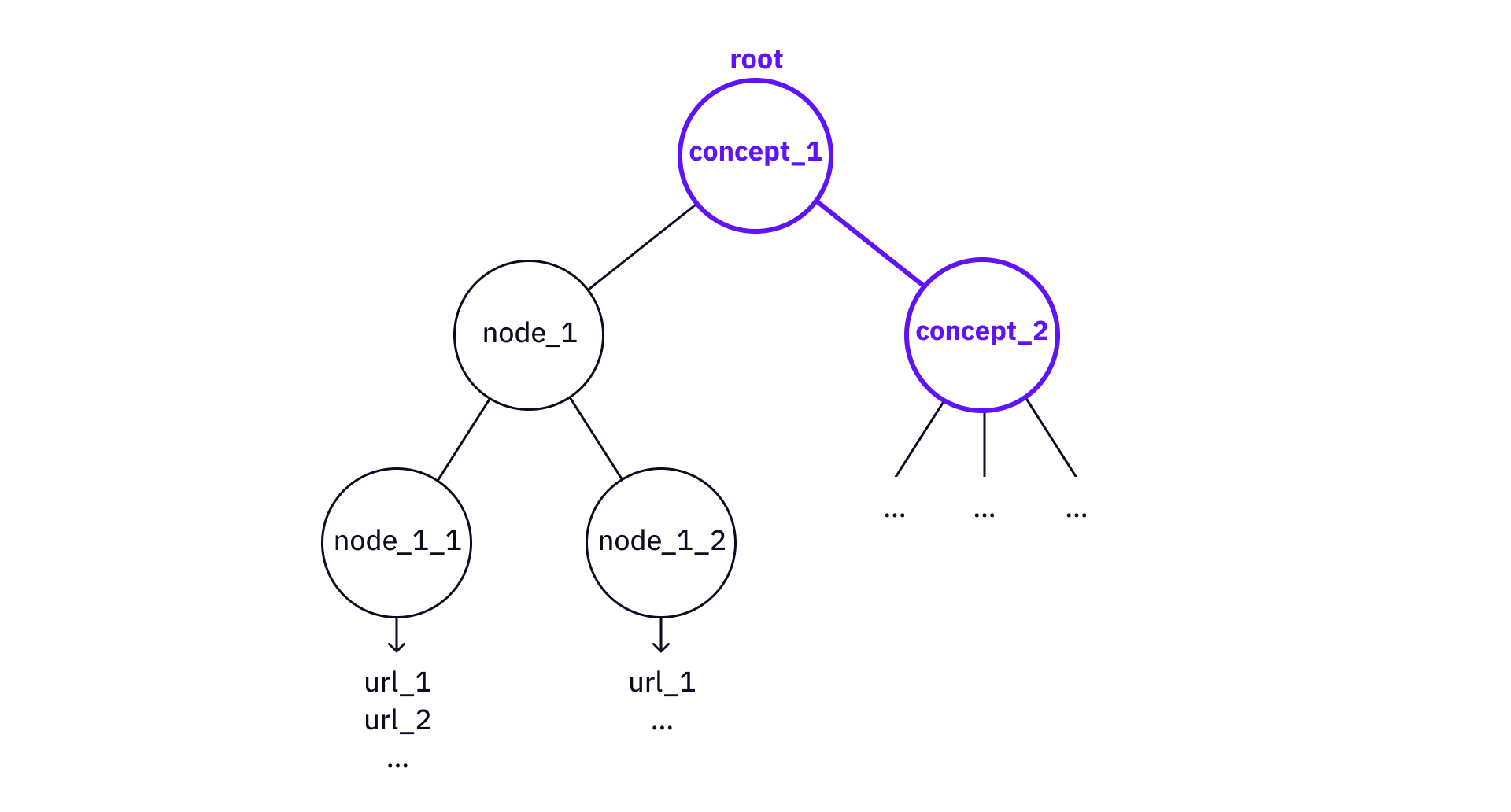

To retrieve details, we break a knowledge tree down. Conversely, once we have a knowledge tree, we can use it to build up bigger trees (i.e., higher abstractions of knowledge and understanding).

In the case of build-up, the “Merkle tree” tree can be used as a node to construct more complex knowledge trees such as “Verkle tree” or “Merkle multi-proof”.
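Build-up can be sketched as grafting an existing tree in as a child of a new root. This Python sketch redefines a hypothetical `Concept` node so that it stands alone; the `build_up` helper is an assumption, and “vector commitments” is a single illustrative sibling rather than a curated breakdown of “Verkle tree”.

```python
from dataclasses import dataclass, field


@dataclass
class Concept:
    """A node in a knowledge tree (hypothetical representation)."""
    name: str
    children: list["Concept"] = field(default_factory=list)


def depth(node: Concept) -> int:
    """Number of levels from this node down to its deepest leaf."""
    return 1 + max((depth(c) for c in node.children), default=0)


def build_up(root_name: str, *subtrees: Concept) -> Concept:
    """Create a higher-level tree whose children are existing trees."""
    return Concept(root_name, list(subtrees))


merkle_tree = Concept("Merkle tree", [
    Concept("cryptographic hash functions", [
        Concept("hash function"),
        Concept("collision resistance"),
    ]),
    Concept("tree data structures"),
])

# Build-up: the whole "Merkle tree" tree becomes one node of "Verkle tree".
verkle_tree = build_up("Verkle tree", merkle_tree, Concept("vector commitments"))

if __name__ == "__main__":
    print(depth(merkle_tree), depth(verkle_tree))
```

Here the sub-tree is shared by reference rather than copied, so an improvement to the “Merkle tree” tree would show up in every tree built on top of it; whether a real system should share or snapshot sub-trees is an open design choice.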

Notably, the key point here is the structure of the tree. A knowledge tree goes from its root concept down to its leaves, which point to all the necessary references to existing Web resources. The type of relation between nodes does not matter here (unlike the “triple” idea in knowledge graph systems).

2. Understanding through “related knowledge”

We also obtain a deeper understanding of knowledge by adding more “context”. As Wittgenstein famously wrote, “But what is the meaning of the word ‘five’? No such thing was in question here, only how the word ‘five’ is used.” The idea behind this is that the meaning of something depends on the other concepts related to it, which collectively determine its meaning. By adding more context (i.e., knowledge related to the knowledge itself), we can understand the knowledge in more “depth”.

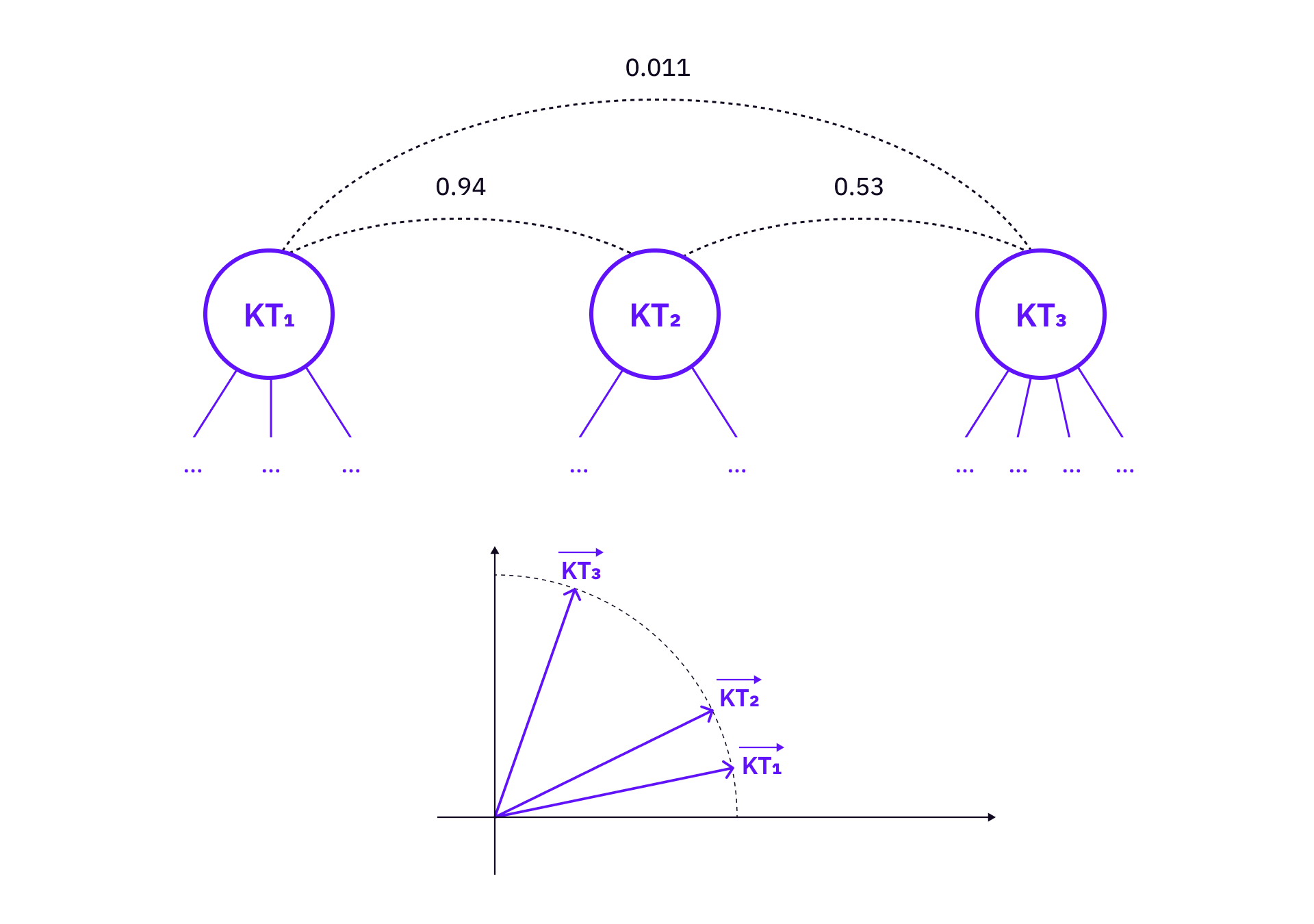

In general, trees are easier for people to understand than graphs. Instead of constructing a knowledge graph, we can think of “related knowledge” in a more practical way: a set of knowledge trees with linked roots and nodes, essentially forming a knowledge forest.

The knowledge forest can be constructed as a database of many knowledge trees (planted in parallel). There are two basic operations we can perform on the database.

- Build links between different trees, which is useful when we visualize the knowledge trees.

- Represent the characteristics of each knowledge tree as a vector in some vector space. The vectors can then be used to relate knowledge trees that are conceptually related but not directly linked via the first operation.
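Both operations can be sketched in a few lines of Python, under stated assumptions: explicit links are stored as unordered pairs of tree names, and each tree's “characteristics” are approximated by a bag-of-concepts vector (one dimension per concept label in the tree). Cosine similarity between these vectors then surfaces trees that share concepts but have no explicit link. The tree contents and the `0.3` threshold are hypothetical; a real system might use learned text embeddings instead.

```python
import math
from collections import Counter

# Each tree is summarized by the concept labels it contains (hypothetical data).
forest = {
    "Merkle tree": ["hash function", "collision resistance", "tree data structure"],
    "Verkle tree": ["vector commitment", "tree data structure", "hash function"],
    "zk-SNARK": ["elliptic curve", "polynomial commitment", "hash function"],
    "Greek mythology": ["Zeus", "Olympus", "Titan"],
}

# Operation 1: explicit links between trees, stored as unordered pairs.
links: set[frozenset[str]] = {frozenset({"Merkle tree", "Verkle tree"})}


def vectorize(concepts: list[str]) -> Counter:
    """Bag-of-concepts vector: concept label -> count."""
    return Counter(concepts)


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse vectors."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def related_unlinked(name: str, threshold: float = 0.3) -> list[tuple[str, float]]:
    """Operation 2: trees similar to `name` that are not already linked to it."""
    me = vectorize(forest[name])
    out: list[tuple[str, float]] = []
    for other, concepts in forest.items():
        if other == name or frozenset({name, other}) in links:
            continue
        score = cosine(me, vectorize(concepts))
        if score >= threshold:
            out.append((other, round(score, 3)))
    return sorted(out, key=lambda pair: -pair[1])


if __name__ == "__main__":
    print(related_unlinked("Merkle tree"))
```

In this toy data, “Merkle tree” and “zk-SNARK” share only “hash function”, which is still enough to suggest them as related even though no one has linked them.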

On Depth of Understanding

In general, people have different levels of understanding of the same concept. For some people, the concept of a Merkle tree is straightforward enough to need no further breakdown (their brain has encapsulated it as common sense), while others lack the background to understand the “Merkle tree” concept and need a further breakdown.

Therefore, knowledge trees do not have to be mutually exclusive, and different trees may overlap. There might be trees explaining rudimentary concepts as well as trees constructed for advanced concepts.
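One way to model different depths of understanding is to expand a tree only until a concept the reader already knows is reached, and collapse it there. This Python sketch assumes we somehow know the set of concepts a given reader treats as common sense; `view_for`, the `known` set, and the tree contents are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Concept:
    """A node in a knowledge tree (hypothetical representation)."""
    name: str
    children: list["Concept"] = field(default_factory=list)


def view_for(node: Concept, known: set[str], indent: int = 0) -> list[str]:
    """Render the part of a tree a reader still needs; known concepts stay collapsed."""
    if node.name in known:
        return ["  " * indent + node.name + " (known)"]
    lines = ["  " * indent + node.name]
    for child in node.children:
        lines.extend(view_for(child, known, indent + 1))
    return lines


verkle = Concept("Verkle tree", [
    Concept("Merkle tree", [
        Concept("cryptographic hash functions", [Concept("hash function")]),
        Concept("tree data structures"),
    ]),
    Concept("vector commitments"),
])

if __name__ == "__main__":
    print("\n".join(view_for(verkle, known={"Merkle tree"})))  # advanced reader
    print("\n".join(view_for(verkle, known=set())))            # beginner
```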

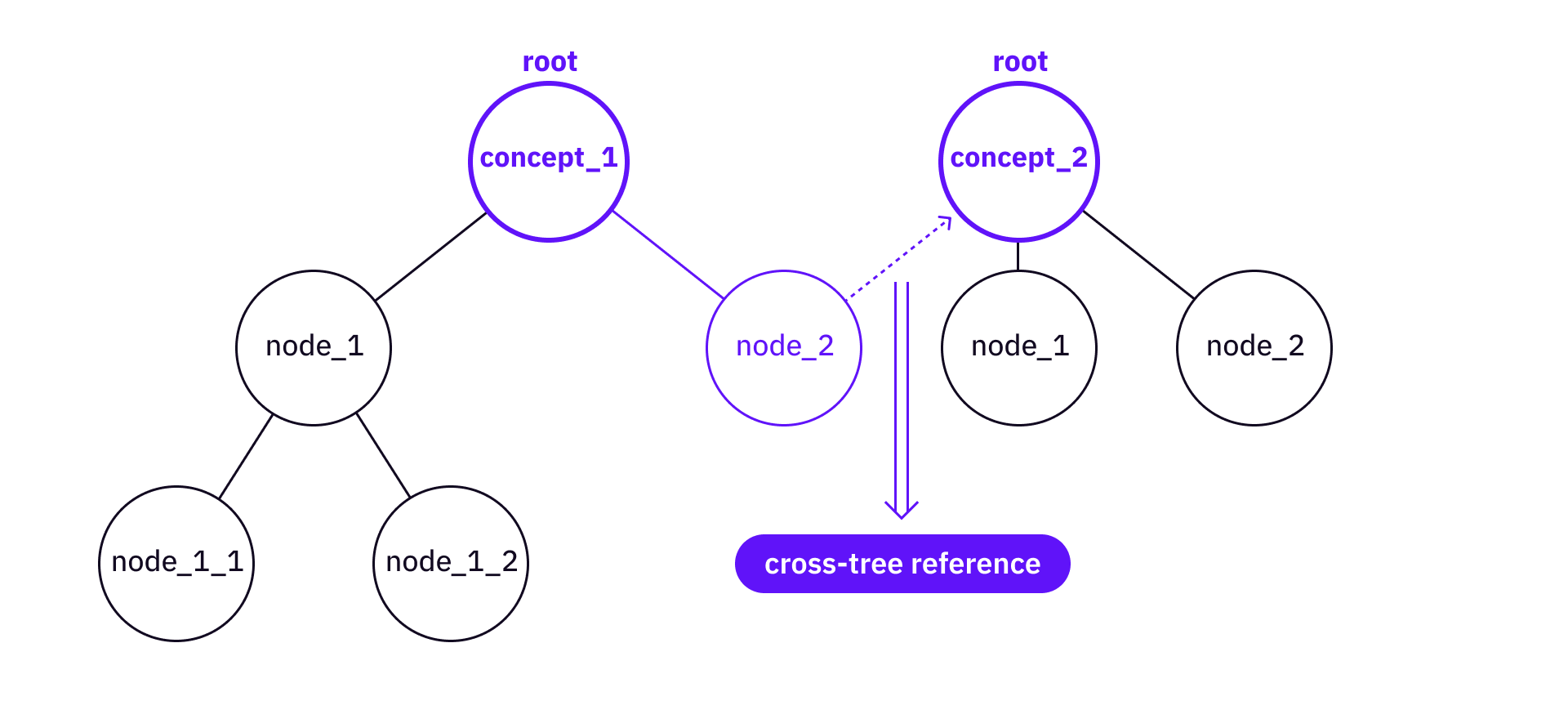

The overlaps might create redundancy among the trees. To reduce redundancy, we can introduce the following operations:

- cross-tree reference (dashed link) - create a link that connects a node from one tree to another tree’s root.

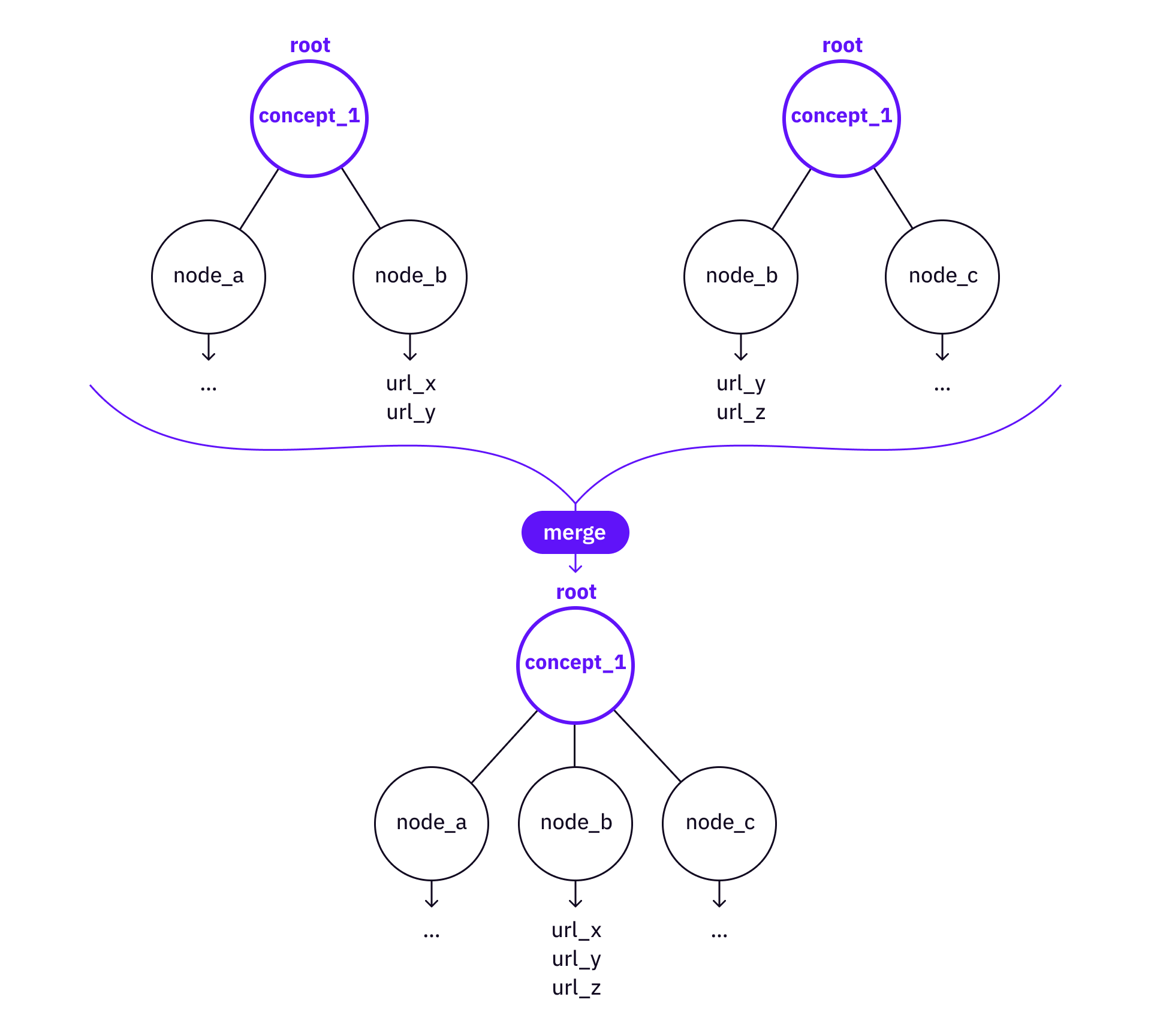

- merge - when nodes in both trees already have sub-trees under them, it might be worth merging information from the more advanced tree into the more rudimentary tree, if the advanced tree has valuable nodes, leaves, and references that the rudimentary tree has not yet covered.
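Both redundancy-reducing operations can be sketched in Python, with the caveat that the representation and the merge policy are assumptions: a cross-tree reference drops a node's duplicated sub-tree and records a dashed link to another tree's root instead, and merge copies into the rudimentary tree any references and children that only the advanced tree has, matching nodes by name. The tree contents and the “reference A/B” strings are hypothetical.

```python
import copy
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Node:
    name: str
    children: list["Node"] = field(default_factory=list)
    references: list[str] = field(default_factory=list)
    link_to: Optional[str] = None  # dashed link: name of another tree's root


def cross_reference(node: Node, target_root: str) -> None:
    """Replace a duplicated sub-tree with a dashed link to another tree's root."""
    node.children.clear()
    node.link_to = target_root


def merge(rudimentary: Node, advanced: Node) -> None:
    """Copy into `rudimentary` whatever `advanced` has that it lacks.

    Nodes are matched by name (an assumption); unmatched sub-trees are
    deep-copied so the two trees do not share mutable state.
    """
    for ref in advanced.references:
        if ref not in rudimentary.references:
            rudimentary.references.append(ref)
    existing = {child.name: child for child in rudimentary.children}
    for child in advanced.children:
        if child.name in existing:
            merge(existing[child.name], child)
        else:
            rudimentary.children.append(copy.deepcopy(child))


# Hypothetical trees; "reference A/B" stand in for real Web resources.
basic = Node("Merkle tree", [Node("hash function", references=["reference A"])])
advanced = Node("Merkle tree", [
    Node("hash function", references=["reference A", "reference B"]),
    Node("multi-proofs"),
])
merge(basic, advanced)

verkle = Node("Verkle tree", [Node("Merkle tree", [Node("hash function")])])
cross_reference(verkle.children[0], "Merkle tree")
```

Matching by name is the simplest possible policy; in practice, deciding whether two nodes describe the same concept is exactly where human review would come in.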

Knowledge Tree and Meta Operations

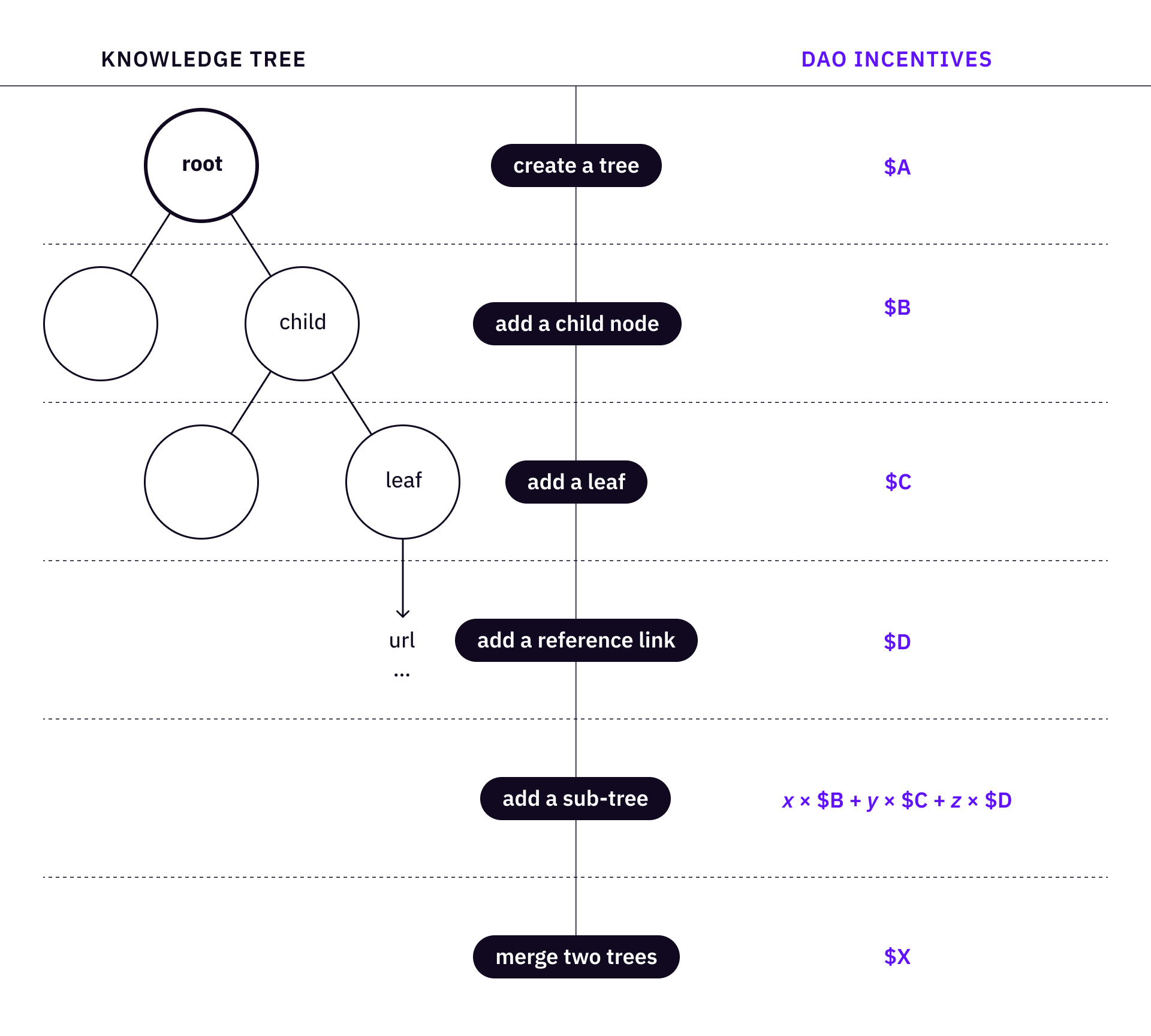

A single knowledge tree consists of a root, a set of child nodes, and a set of leaves, organized into a tree structure. We can then define a set of basic operations to create and refine a tree.

- Create a root (a tree)

- Add a child node

- Add a leaf to a node

- Add a reference link to a leaf

Then we can define a series of high-level operations that let actual users “plant” trees and contribute to them.

- Add a sub-tree – introduce a child node that the knowledge tree needs, complete with its own nodes and leaves

- Merge two trees of the same concept
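The basic operations and the two high-level operations can be sketched together in Python, with the high-level ones expressed purely in terms of the basic ones. Everything here is hypothetical: the `KnowledgeTree` class, the nested `spec` format for submitting a sub-tree, and the deliberately simple merge policy (copy top-level branches whose labels the target lacks). Each basic operation is logged, so a contribution can later be audited.

```python
class KnowledgeTree:
    """Hypothetical knowledge tree; node 0 is the root."""

    def __init__(self, root_label: str) -> None:
        # Basic operation: create a root (a tree).
        self.labels: dict[int, str] = {0: root_label}
        self.children: dict[int, list[int]] = {0: []}
        self.refs: dict[int, list[str]] = {}  # only leaves have an entry here
        self.log: list[tuple] = [("create_root", root_label)]

    def _attach(self, parent: int, label: str, op: str) -> int:
        if parent in self.refs:
            raise ValueError("leaves cannot have children")
        node = len(self.labels)
        self.labels[node] = label
        self.children[node] = []
        self.children[parent].append(node)
        self.log.append((op, parent, label))
        return node

    def add_child(self, parent: int, label: str) -> int:
        """Basic operation: add a child node."""
        return self._attach(parent, label, "add_child")

    def add_leaf(self, parent: int, label: str) -> int:
        """Basic operation: add a leaf to a node."""
        leaf = self._attach(parent, label, "add_leaf")
        self.refs[leaf] = []
        return leaf

    def add_reference(self, leaf: int, url: str) -> None:
        """Basic operation: add a reference link to a leaf."""
        if leaf not in self.refs:
            raise ValueError("references attach to leaves only")
        self.refs[leaf].append(url)
        self.log.append(("add_reference", leaf, url))

    def add_subtree(self, parent: int, spec: dict) -> int:
        """High-level: replay a nested spec as a sequence of basic operations.

        A node spec is {"label": ..., "children": [...]};
        a leaf spec is {"label": ..., "refs": [...]}.
        """
        if "children" in spec:
            node = self.add_child(parent, spec["label"])
            for child in spec["children"]:
                self.add_subtree(node, child)
            return node
        leaf = self.add_leaf(parent, spec["label"])
        for url in spec.get("refs", []):
            self.add_reference(leaf, url)
        return leaf

    def to_spec(self, node: int) -> dict:
        """Export a sub-tree in the same nested format."""
        if node in self.refs:
            return {"label": self.labels[node], "refs": list(self.refs[node])}
        return {"label": self.labels[node],
                "children": [self.to_spec(c) for c in self.children[node]]}

    def merge(self, other: "KnowledgeTree") -> None:
        """High-level: merge a tree of the same concept (top-level branches only)."""
        if other.labels[0] != self.labels[0]:
            raise ValueError("can only merge trees of the same concept")
        have = {self.labels[c] for c in self.children[0]}
        for c in other.children[0]:
            if other.labels[c] not in have:
                self.add_subtree(0, other.to_spec(c))


a = KnowledgeTree("Merkle tree")
a.add_subtree(0, {"label": "cryptographic hash functions", "children": [
    {"label": "hash function", "refs": ["https://en.wikipedia.org/wiki/Hash_function"]},
]})
b = KnowledgeTree("Merkle tree")
b.add_leaf(0, "tree data structures")
a.merge(b)
```

Because every high-level operation decomposes into logged basic operations, the log in `a.log` is a natural unit for review and reward.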

Knowledge Forest

When plenty of knowledge trees are planted, we have a knowledge forest!

A knowledge forest is a large group of knowledge trees planted together. One interesting property of a knowledge forest is that its trees can become entangled. In theory, connections between different nodes and leaves can be arbitrary (e.g., a link between a leaf of one tree and the root of another tree). Effectively, once we add dashed links, the knowledge forest “sort of” becomes a knowledge graph. However, it is the individual knowledge tree that matters.

For example, the dashed line represents a link between the MACI tree and the zk-SNARK tree.

The leaves of knowledge trees connect to existing articles / videos / resources on the Web. Therefore, the layers above those leaves are the layers of structural information or understanding.

What we can do with the knowledge forest is completely open. Probably the most important thing is to think about the ecosystem of a collaborative knowledge base from the very beginning. There are many things we might want to do with a knowledge forest; here are three examples:

- Visualize the knowledge trees and the knowledge forest

- Navigate through the knowledge forest via dashed links

- Find clusters of knowledge trees
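Navigation and clustering can be sketched by treating dashed links as edges between trees. Assuming links are stored as unordered pairs of tree names (the data below is hypothetical, echoing the MACI / zk-SNARK example), breadth-first search finds a shortest chain of dashed links between two trees, and connected components give a crude notion of clusters. Visualization is left out here; the same adjacency list could be handed to a graph-drawing tool.

```python
from collections import deque
from typing import Optional

trees = ["MACI", "zk-SNARK", "Merkle tree", "Verkle tree", "Zeus", "Trojan War"]
dashed_links = [
    ("MACI", "zk-SNARK"),
    ("zk-SNARK", "Merkle tree"),
    ("Merkle tree", "Verkle tree"),
    ("Zeus", "Trojan War"),
]

# Dashed links are treated as undirected edges between tree roots.
adjacency: dict[str, list[str]] = {t: [] for t in trees}
for a, b in dashed_links:
    adjacency[a].append(b)
    adjacency[b].append(a)


def navigate(start: str, goal: str) -> Optional[list[str]]:
    """Breadth-first search: shortest chain of dashed links from start to goal."""
    previous: dict[str, Optional[str]] = {start: None}
    queue = deque([start])
    while queue:
        tree = queue.popleft()
        if tree == goal:
            path: list[str] = []
            node: Optional[str] = tree
            while node is not None:
                path.append(node)
                node = previous[node]
            return path[::-1]
        for neighbor in adjacency[tree]:
            if neighbor not in previous:
                previous[neighbor] = tree
                queue.append(neighbor)
    return None


def clusters() -> list[set[str]]:
    """Connected components of the dashed-link graph."""
    seen: set[str] = set()
    result: list[set[str]] = []
    for t in trees:
        if t in seen:
            continue
        component = {t}
        stack = [t]
        while stack:
            for neighbor in adjacency[stack.pop()]:
                if neighbor not in component:
                    component.add(neighbor)
                    stack.append(neighbor)
        seen |= component
        result.append(component)
    return result


if __name__ == "__main__":
    print(navigate("MACI", "Verkle tree"))
    print(clusters())
```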

Build a DAO, not a Non-profit Organization

Non-profit organizations can make things happen, but DAOs can make things great. The idea here is to map the set of tree operations to a set of incentives: the more standardized the meta-operations are, the better the DAO can scale the coordination of its members.

In the case of knowledge trees, a contributor to the DAO can create a root (equivalent to “create / plant a tree”), add a knowledge path (“grow the tree”), and add reference links to tree leaves. An incentive mechanism defines a set of rules to reward community contributors who take verifiable actions to plant and grow knowledge trees.

At the same time, a review committee (or a review community) is important for planning and quality control. The coordination and incentivization of DAOs have been experimented with extensively (e.g., the DAOrayaki DAO), and similar structures can be implemented here.
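The mapping from standardized tree operations to incentives might look something like the following Python sketch. Everything in it is hypothetical: the reward amounts, the operation names, and the rule that a contribution pays out only after someone other than its author approves it (a stand-in for the review committee). The point is only that standardized operations make rewards mechanical to compute.

```python
from dataclasses import dataclass

# Hypothetical reward schedule: one entry per standardized operation.
REWARDS = {
    "create_root": 10,   # plant a tree
    "add_child": 3,      # grow the tree
    "add_leaf": 2,
    "add_reference": 1,
}


@dataclass
class Contribution:
    contributor: str
    operation: str
    approved: bool = False  # set during review


def approve(contribution: Contribution, reviewer: str) -> None:
    """Review step; a contributor may not approve their own work."""
    if reviewer == contribution.contributor:
        raise PermissionError("self-review is not allowed")
    contribution.approved = True


def payouts(contributions: list[Contribution]) -> dict[str, int]:
    """Total rewards per contributor, counting approved contributions only."""
    totals: dict[str, int] = {}
    for c in contributions:
        if c.approved:
            totals[c.contributor] = totals.get(c.contributor, 0) + REWARDS[c.operation]
    return totals


log = [
    Contribution("alice", "create_root"),
    Contribution("alice", "add_child"),
    Contribution("bob", "add_reference"),
]
approve(log[0], reviewer="carol")
approve(log[2], reviewer="carol")

if __name__ == "__main__":
    print(payouts(log))  # {'alice': 10, 'bob': 1}
```

Alice's unreviewed `add_child` earns nothing yet; it would count once a reviewer approves it.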

Knowledge Forest vs. Knowledge Graph

When we learn new concepts and acquire knowledge, trees are easier to understand. For any specific topic, it is intuitively easy for a human to grasp the structure of knowledge in a tree, because a tree has no loops, and if its depth is limited to a certain level, it is much easier for the human brain to process and remember.

In addition, knowledge graph representations are limited in how well they can represent fuzzy or vague connections between nodes of knowledge (the same problem affects common-sense knowledge representation).

This doesn’t mean knowledge trees are always better than knowledge graphs. When it comes to storytelling, a knowledge graph is much more useful than knowledge trees (e.g., a graph of all of Greek mythology). There are actually many existing tools (1, 2) for building knowledge graphs, but I’m surprised that most of them are becoming SaaS companies.

A team of BUIDLers working on an actual implementation of knowledge trees and a knowledge forest would have to work out tons of details: the data structures, product design, contribution and incentive details, UI, etc. Whatever the details, if a knowledge forest is to be built, I feel that in general it should be built as a public good that organizes knowledge and opens it to all human beings in the world. But let’s see what the Dora community comes up with!

Conclusion

The idea is to build a new kind of knowledge base on top of the existing Web infrastructure (Wikipedia and more) and make it available to all human beings, so that the complexity of understanding abstract knowledge is reduced as much as possible. (Finding a route between concepts in a knowledge graph like the Web or Wikipedia can be as costly as O(n log n), whereas a reasonably balanced tree of n nodes has a depth of only about log(n), which makes navigation much easier.) Contributors would be coordinated through a DAO, with advanced crypto-native incentives ensuring the sustainability of the organization. The idea in this article is in no way complete: there is plenty of room for discussion and improvement, and there are many engineering and product problems to solve if a team wants to turn it into reality.
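The depth argument is easy to check numerically, with the caveat that it holds only for reasonably balanced trees: a complete tree with branching factor b holds 1 + b + b² + … nodes, so n concepts fit in about log_b(n) levels, whereas a degenerate chain would need n levels. The helper below is an illustrative Python sketch; the branching factor of 10 in the example is an assumption.

```python
def levels_needed(n: int, b: int) -> int:
    """Smallest depth d such that a complete b-ary tree with d levels holds >= n nodes.

    A complete b-ary tree with d levels holds 1 + b + b**2 + ... + b**(d - 1) nodes.
    """
    if n < 1 or b < 2:
        raise ValueError("need n >= 1 and b >= 2")
    levels, capacity, width = 1, 1, 1
    while capacity < n:
        width *= b           # nodes on the next level
        capacity += width    # total nodes in a tree with one more level
        levels += 1
    return levels


if __name__ == "__main__":
    for n in (100, 10_000, 1_000_000):
        print(n, levels_needed(n, 10))  # 3, 5, and 7 levels respectively
```

Even a million concepts fit within seven levels at a branching factor of 10, which is the kind of depth a reader can realistically navigate from the root.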